The QA Plugin is awesome. No, really! But that may be hard to believe until you’ve seen one of the reports (displays best in Firefox). After the “more” is a breakdown of the report, which now includes word counts and an element count pie chart, in addition to the terminology and markup checks.

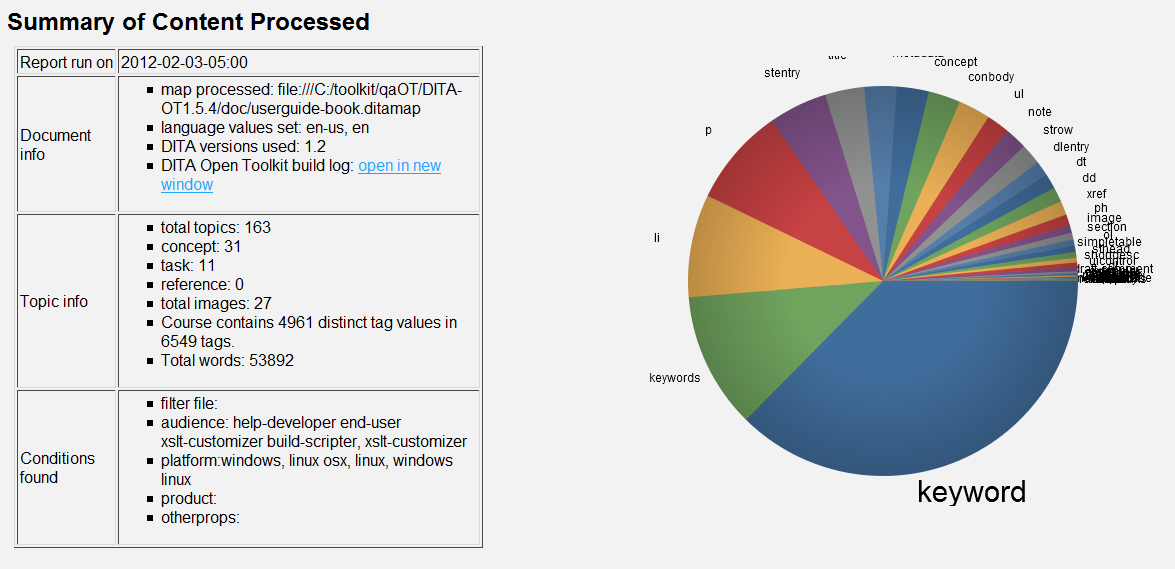

This report was run against the DITA User’s Guide.

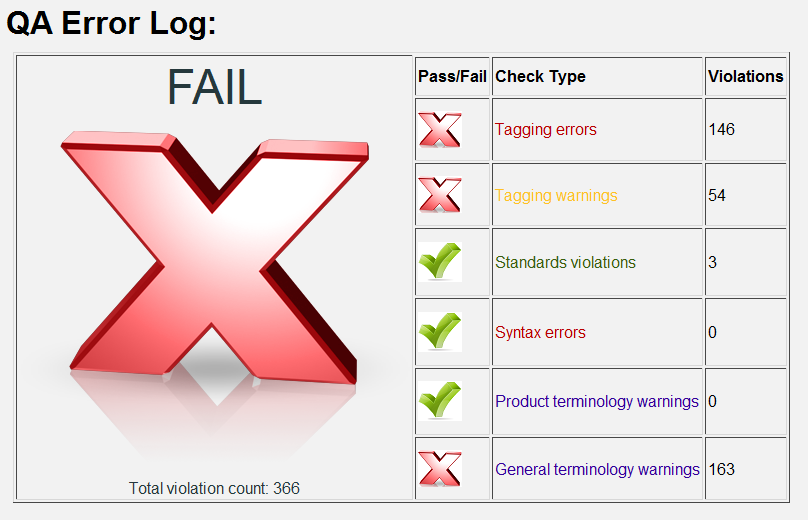

The report generates a per-check-type pass or fail based on the number of errors found. Syntax errors, for example, generate a fail if just one is found. Terminology errors, however, do not generate a fail until 10 are found (see line 202 of qascript.xsl). There is also an overall pass/fail calculated from the individual ones (qascript.xsl line 111). As you can see, the DITA User Guide does not fare well against the default plugin – but we shouldn’t expect it to. The plugin needs to be customized for a particular group’s needs.
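The pass/fail rules above can be sketched roughly as follows. This is a Python illustration, not the plugin's actual XSLT: only the two thresholds (1 for syntax, 10 for terminology) come from the description above, while the check names, function names, and the "any failed check fails the whole report" rule are assumptions made for the example.

```python
# Thresholds from the post: syntax fails at 1 error, terminology at 10.
# The dictionary keys and function names are hypothetical.
FAIL_THRESHOLDS = {"syntax": 1, "terminology": 10}


def check_status(check_type, error_count):
    """Return 'fail' once error_count reaches the threshold for check_type."""
    threshold = FAIL_THRESHOLDS[check_type]
    return "fail" if error_count >= threshold else "pass"


def overall_status(error_counts):
    """Assumed aggregation: the report fails if any individual check fails."""
    statuses = {name: check_status(name, count)
                for name, count in error_counts.items()}
    overall = "fail" if "fail" in statuses.values() else "pass"
    return statuses, overall


if __name__ == "__main__":
    print(overall_status({"syntax": 0, "terminology": 12}))
```

A group customizing the plugin would presumably tune these thresholds in qascript.xsl rather than anywhere like this; the sketch only shows the shape of the logic.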

The document summary provides a variety of useful information about the DITA document, including a total word count (new!), a pie chart that shows how often each element is used (new!), which conditions have been applied, and a bunch of other stuff. This information, especially the conditions, is useful for troubleshooting successful builds. That may sound counter-intuitive, but a build can often succeed and still have issues with conditions, language codes, or a host of other things that are valid DITA but not correct.

The chart, by the way, leverages the JavaScript InfoVis Toolkit, or Jit for short. This is the first chart we’ve added, but we have additional charts in the works. As of now, the pie chart shows every element used, which means the labels can get a bit jumbled. We thought about limiting it to the top ten elements, but decided we needed to include all of them so that any surprise elements are revealed. Just hover over a slice to see the element count.
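To give a feel for the numbers behind the summary and the pie chart, here is a minimal Python sketch of a total word count and a per-element tally. The plugin itself does this in XSLT; the sample topic, the `summarize` function, and the whitespace-based definition of a "word" are all assumptions for illustration.

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Hypothetical sample topic, kept free of a DOCTYPE so it parses standalone.
SAMPLE_TOPIC = """<topic id="sample">
  <title>Sample topic</title>
  <body>
    <p>First paragraph with <b>bold</b> text.</p>
    <p>Second paragraph.</p>
  </body>
</topic>"""


def summarize(xml_text):
    """Return (element usage counts, total word count) for one topic."""
    root = ET.fromstring(xml_text)
    # Count every element, including the root, by tag name.
    element_counts = Counter(el.tag for el in root.iter())
    # Assumption: a "word" is any whitespace-separated token in a text node.
    word_count = sum(len(text.split()) for text in root.itertext())
    return element_counts, word_count


if __name__ == "__main__":
    counts, words = summarize(SAMPLE_TOPIC)
    print(counts.most_common(), words)
```

Tallying every element rather than the top ten mirrors the choice described above: a surprise element shows up even if it is used only once.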

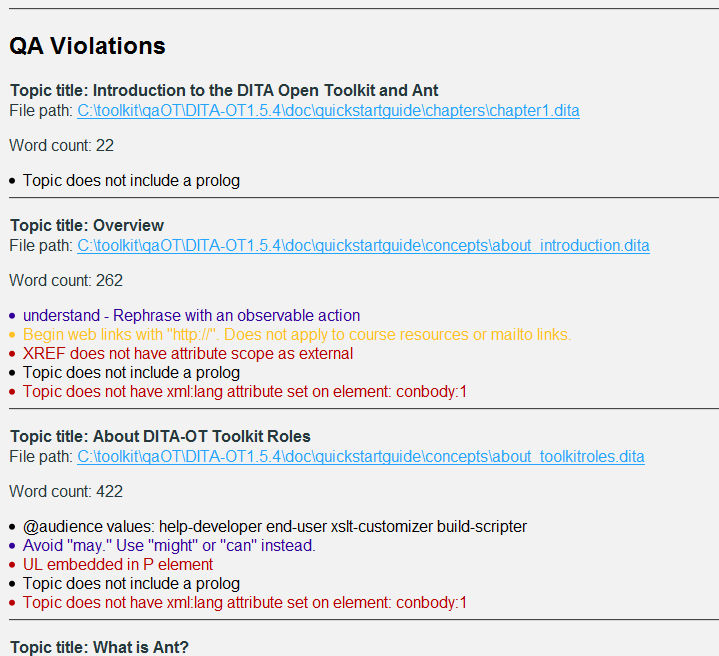

Finally, the report lists errors, conditions, and word counts (new!) per-topic. This is where the work gets done–authors can review this section and make corrections in the corresponding DITA files. The issues, especially terminology / language warnings, should be reviewed, of course. The QA plugin is pretty good, but it is not a natural language processor, so it may generate false positives.

We have more plans around word counts too. We’re thinking we can add a target word count that represents the ideal topic size. Then we can flag topics that are over or under the ideal size, perhaps by 10% or more.
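Since this feature doesn't exist yet, the following is only a sketch of how the flagging might work, in Python rather than the plugin's XSLT. The function name, the topic file names, and the target of 200 words are hypothetical; the 10% tolerance is the figure floated above.

```python
def flag_topic_sizes(word_counts, target, tolerance_percent=10):
    """Flag topics whose word count differs from target by tolerance_percent or more.

    Returns a dict mapping topic name to 'over' or 'under'; topics within
    the tolerance are left out.
    """
    flags = {}
    for topic, count in word_counts.items():
        deviation = abs(count - target)
        # Integer comparison avoids floating-point surprises at the boundary.
        if deviation * 100 >= target * tolerance_percent:
            flags[topic] = "over" if count > target else "under"
    return flags


if __name__ == "__main__":
    counts = {"intro.dita": 200, "install.dita": 220, "faq.dita": 150}
    print(flag_topic_sizes(counts, target=200))
```

In practice the ideal size would probably vary by topic type (concept, task, reference), so a single target is a simplification.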

We’d love to hear more ideas for what to include in the report.

[Update: I just added @rev and @status values to the per-topic section.]

I’d like to see much more robust setup documentation. I’m trying to use this with Oxygen, but it’s not clear how to set up the plugin to run. For example, when trying to run qascript.xsl, I get an error that “Function key must have 2 arguments”. It’s not clear what needs to be passed in for BASEDIR, fileroot, FILTERFILE, and input.

When trying to run the ant target, I also get:

ant -Dargs.input=test.ditamap -Dtranstype=qa -logger=org.apache.tools.ant.XmlLogger -logfile=out/qalog.xml -Douter.control=quiet

“Unknown argument: -logger=org.apache.tools.ant.XmlLogger”

Of course, it would help if I chose the XSLT 2.0 transformer… I do have the stylesheet transform working!

ant build still failing:

ant -Dargs.input=test.ditamap -Dtranstype=qa -buildfile build_qa.xml

Buildfile: /Applications/oxygen/frameworks/dita/DITA-OT/plugins/com.citrix.qa/build_qa.xml

BUILD FAILED

Target “build-init” does not exist in the project “dita2qa”. It is used from target “buildqahtml”.

Total time: 4 seconds

lil’ help?

Hi Scott,

build-init is a core DITA-OT target used by all the transforms (I believe). Which version of the OT are you using? Do you know if Oxygen runs the integrator before a build?

One thing to try would be to run the transform outside of Oxygen. You should be able to do this (on Win) by:

1. Go to “C:\Program Files\Oxygen XML Editor 13\frameworks\dita\DITA-OT”.

2. Double-click startcmd.bat.

3. Type the following command:

ant -Dargs.input=path to .ditamap -Dtranstype=qa

The result will be in the C:\Program Files\Oxygen XML Editor 13\frameworks\dita\DITA-OT\out folder.

You can also try it with the DITA user guide (as long as you set the input map to chunk=”to-content”) with this command:

ant -Dargs.input=doc/userguide-book.ditamap -Dtranstype=qa -Douter.control=quiet

Finally, if using ant causes any issues, you can try the java command:

java -jar lib\dost.jar /transtype:qa /i:doc/userguide-book.ditamap

If all that fails, contact me via email and we’ll get it figured out.

I finally got it working in Oxygen! Here’s what I did:

1. Create a new DITA OT transformation scenario with the following settings:

in Advanced tab, Additional arguments: “-Dtranstype=qa”

in Output tab, Base directory: ${cfd}

Output folder: ${cfd}/QA

Excellent! When I saw your previous comments, I started digging through old emails to find how I’d worked this out with another user… *Just* posted similar steps in a new article. I think you can set the transtype on the parameters tab too.

So, now that it’s working, what do you think?

Very impressive detail in the report. It’s going to take me a bit to work through all of the warnings and rules, but I think it will work very well. I’ll pass along any issues or new rule suggestions if we find any!

Thanks again,

–Scott

I know I’ve been negligent in the documentation – my apologies for that. We have a major update coming, and I’ll make sure I do a better job at documenting it.

You’ll need to extract the source code from the SourceForge SVN project, see the for instructions on how to extract. If you’d like to contribute to the project, click the request to join link on the right side of the page.

I am currently evaluating the Eclipse WTP (Web Tools Platform). I did try XMLBuddy, but found it to be lacking too many features. Will update once I take a more in-depth look at WTP.

Ethan Cane, Web Developer

Way cool! Some very valid points! I appreciate you penning this post and also the rest of the site is very good.